In 2012, the U.S. Defense Advanced Research Projects Agency announced the DARPA Robotics Challenge (DRC). The multi-year, multi-million-dollar competition for disaster robotics resulted in Boston Dynamics’ Atlas, some absolutely incredible moments from one of the very first generations of useful humanoid robots, and a blooper video that will live on forever.

Gill Pratt, the architect of the competition, had a very clear understanding of what the DRC was going to do for robotics. “The reason [for the DARPA Robotics Challenge] is actually to push the field forward and make this capability a reality,” Pratt told IEEE Spectrum in 2012. At the time, he pointed out that before the DARPA Grand Challenge in 2004 and the DARPA Urban Challenge in 2007, driverless cars for complex environments essentially did not exist. He saw the DRC doing the same thing for robotics.

It’s been about a decade since the conclusion of the DARPA Robotics Challenge, and many in the industry believe humanoid robots are about to have the transformative moment that Pratt predicted. But as is common in robotics, things tend to be far more difficult than they seem like they should be. Spectrum checked in with Pratt, now the CEO of the Toyota Research Institute (TRI), to find out what’s holding humanoid robotics back, what he thinks these robots should be doing (or not doing), and how to navigate the humanoid hype bubble.

What do you think about this robotics moment that we’re in?

Gill Pratt: What has changed is actually not about humanoids. Many people have been building research robots in the humanoid form for a long time. What’s different now isn’t the body, but the brain. We have always had this disparity in the robotics field where the mechanisms we were building were incredibly capable, but we didn’t really have the means for making the utility of the robot match that potential. Now we actually do, and that’s because of the AI revolution that has happened over the last few years.

It’s very tempting to look back ten years and directly credit the DRC with a lot of what is now happening with commercial humanoids. Is there any reason not to do that?

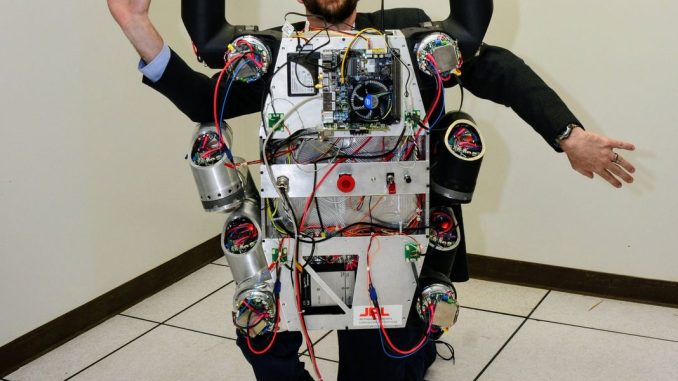

Gill Pratt poses with an early version of NASA’s Valkyrie DRC robot. Gill Pratt

Pratt: No, but I want to be humble about it. The DRC was focused on half autonomy and half teleoperation in real time. There was remote supervision, and then semi-autonomy to amplify that supervision to handle tasks in real time while the remote person was telling the robot what to do. That was all before the breakthroughs that have happened in AI recently.

What has changed now is that we have a way to essentially teach robots what to do, and make them competent in a way that doesn’t require writing code; you can just demonstrate the task to the robot instead. With a sufficient amount of that data and new AI methods, robots can be far more performant than ever before.

But that data is a bottleneck, right? How do we know what it should consist of, and what a sufficient amount is to get a robot to do something reliably?

Pratt: This mirrors exactly the debate going on in large language models [LLMs]. You have certain people who believe that if you take LLMs—which are auto-regressive predictors that guess what the next word should be based on past words—and patch them up with a variety of methods to solve their hallucinations, we’ll eventually get to a point where we can trust the AI system. And then there are other people, and I think Yann LeCun is the most well-known of them, who say that’s nonsense, and we need something else. His view, and I agree, is that we need world models. We need some way for the AI system to imagine, try things out, and truly reason.

And I know that we’re applying words like ‘reason’ to what are essentially pattern-matching systems. Saying that there’s ‘reasoning’ is just a sticker we put on whatever we’ve built; it’s not true reasoning.

This is an example of “system one” versus “system two” thinking, right?

Pratt: Yes. System one is the fast, reflexive thinking we have, which is the kind of pattern matching that current LLMs do. System two is the slow reasoning that involves imagination and world models. That’s what we have not done yet. Progress on system one has been extraordinary, but we still don’t have system two. These attempts to patch system one to make it system two are like trying to squeeze a balloon filled with water; you squeeze it on one side and the water bulges out on the other side. You keep getting surprised that you fix one thing and something else breaks, and the performance overall doesn’t really get that much better.

How have you been approaching this problem at TRI?

Pratt: Two years ago, we came up with diffusion policy, and then we came up with what I call large behavior models (LBMs). That involves having one model trained on many tasks, and showing that as you add each task, it actually helps with the other tasks and cuts down on the amount of training data needed to reach a given level of performance. These have been incredible system one advances.

The breakthrough happened when we realized that diffusion could be applied to robot behavior. We discovered that operating in the behavior space, from vision in, to action out, worked incredibly well. That kicked off the whole field, and since then, I think every robotics demonstration that we’ve seen is using some form of diffusion policy to do what it’s doing. But again, this is system one pattern matching: ‘If I see the world like this, I act on the world like that.’ The robot’s not imagining, thinking, and planning the way traditional robotics with hand coding used to do. It’s just reacting.

System one’s pattern matching often breaks down in the real world, though, as we’ve seen with autonomous driving’s struggles.

Pratt: Ten years ago, when TRI first started, almost everybody was saying that automated driving was right around the corner.

Ten years later, I do think we are now there, and the remaining questions are business ones: How much does the hardware cost, the insurance, the support, does it economically make sense? We haven’t necessarily solved automated driving, but our solutions are good enough, because we use humans for backup. When an automated vehicle gets stuck at a double-parked car, it calls home and asks a person for a system two decision. I think other robots could do that also. Most of the time they do their work on their own, and every once in a while, they raise their hand for help.

If we’ve just barely managed to get autonomous cars right, why are we devoting so much attention to the legged humanoid form factor?

Pratt: We’ve built the world with physical affordances for our bodies. If the robot is to do well in that world, it should have something that takes advantage of those affordances. It’s also easier for imitation learning to work because we have the same form. And legs are good for certain environments; you can step over obstacles to balance faster than you can roll to a new point of support with wheels. Having said all that, legs are not always the most practical thing. It’s very weird to see so much focus on legged robots in factories, which are flat environments perfectly suited for wheels.

Managing the Humanoid Robotics Hype

Do you think that the amount of money being poured into legged humanoids is a good thing for robotics?

Pratt: It has both advantages and dangers. It’s wonderful seeing so many resources going into the robotics field, and I do think that something special has occurred. Things are not the way they were before, and there are so many possibilities when you think about people teaching robots how to do things.

What kinds of things should humans be teaching robots to do?

Pratt: For ten years at TRI, we’ve been thinking about society and aging. It’s not just about physical disability; it’s about loneliness and loss of purpose, which are far more prevalent (and far worse) problems. And so the question is, what can we do technologically to help people feel that they’re younger?

At TRI, we’re exploring “care-receiving robots”—robots that receive teaching from a human. We have evolved to be creatures that love giving and love helping. When you program a machine by demonstration, and that machine goes on to help someone else, you feel a sense of purpose. We think robots can be bi-directional things to improve quality of life psychologically, not only physically.

When you started TRI ten years ago, I asked you what you would be focusing on, and your answer really stuck with me: you said elder care, because ‘we don’t have a choice.’

Pratt: Yes. The statistics in Japan and the U.S. are only getting worse, and we don’t have a choice. It’s important to remember that an aging society has a huge impact on young people. This is because of the dependency ratio, which is how many young people in the workforce are supporting both people that are too young to work, and also people that are too old to work. Those numbers keep getting worse and worse.

How do we solve this?

Pratt: We’ve had some incredible breakthroughs with system one, but it doesn’t mean the robots are going to be doing all that much, unless somebody makes a system two breakthrough also, or unless we have a system where humans provide some level of system two supervisory control.

That kind of human supervisory control takes us right back to the DRC, doesn’t it?

Pratt: [Laughs] That’s exactly right! Look, I’m not going to tell you not to praise the DRC… There was someone who called it the ‘Woodstock of Robots,’ which just warmed my heart, that was so cool!

So, ten years later, how do you feel about the amount of hype in humanoid robotics right now?

Pratt: We are approaching what (I hope!) is a peak of inflated expectations for humanoids. And that’s because nobody’s thinking deeply enough about the system one versus system two thing.

Right now, our physical AI systems are just pattern matching. They’re incredibly capable, and it’s astonishing how good these things are—we are so proud of it. And we do believe that aggregating learning from many tasks through large behavior models will be incredibly effective. But it’s still not system two. There’s a lot of overpromising going on, and it’s very sad because it’s setting us up for a fall. What I’m worried about is the trough of disillusionment that will follow.

How do we avoid that crash in robotics when the humanoid hype bubble bursts?

Pratt: For now, we need damping. In control systems, you stabilize an unstable system by adding damping. The press and the academic world can add lead compensation by reminding everyone that what we’re seeing in humanoids now isn’t really reasoning.

We should also remember that the automated driving field went through a bubble burst also, and just a few companies survived that by keeping the hype down and being persistent. I think we should do that here, too.
